There’s a version of the future that doesn’t arrive with explosions or warnings.

It arrives quietly.

In the background.

In systems we’re told are there to help us.

And by the time most people notice what’s changed…

It’s already too late.

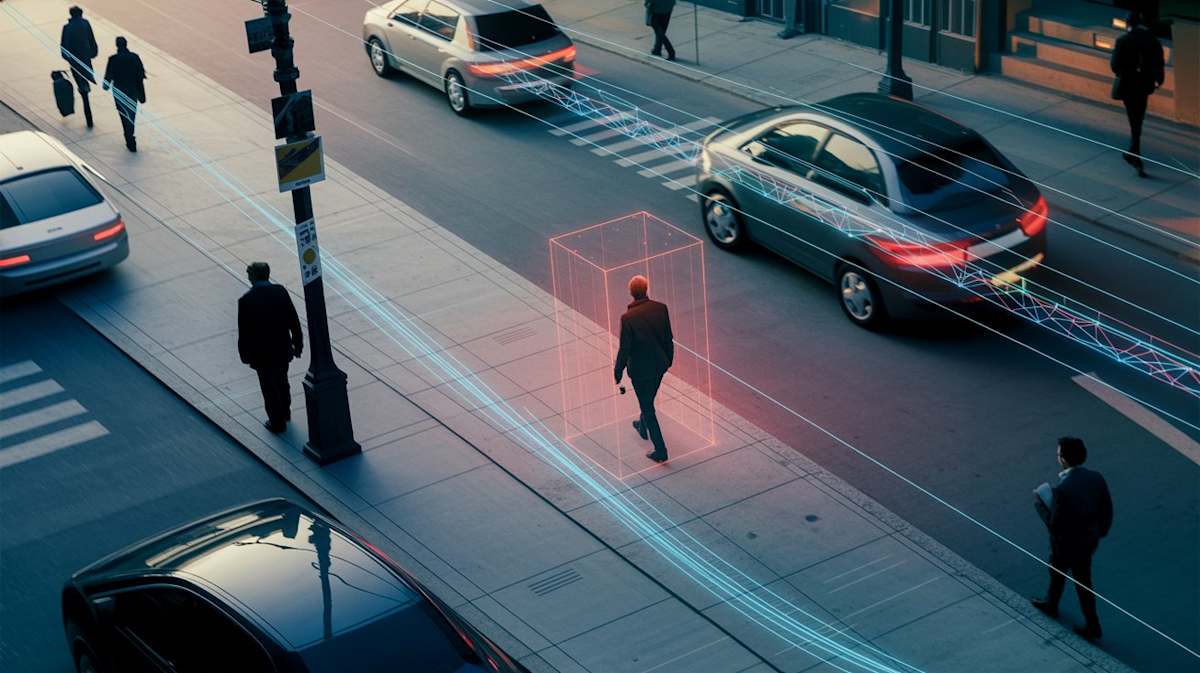

Right now, artificial intelligence is being trained to do something that used to belong only to science fiction:

Predict human behavior before it happens.

Not in theory.

In practice.

Across the world, systems are being developed and tested that can:

- Analyze patterns in movement and behavior

- Flag “anomalies” in real time

- Identify individuals as potential risks

Search interest in terms like “AI predictive policing,” “surveillance technology,” and “pre-crime systems” has surged in recent years.

Because people are starting to realize something uncomfortable:

This isn’t about solving crimes anymore.

It’s about preventing them.

The Promise Sounds Reasonable

The argument is always the same.

If we can stop violence before it happens…

Why wouldn’t we?

If an algorithm can identify a threat early…

Why wait for harm?

On the surface, it feels logical.

Responsible, even.

Until you look a little closer.

The Problem No One Wants to Say Out Loud

Every system has a margin of error.

Even the most advanced AI models.

Let’s say a system is 90% accurate at predicting dangerous behavior.

That sounds extraordinary.

But it also means something else:

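It is wrong about roughly 1 out of every 10 people it evaluates.

And because truly dangerous people are rare, most of the people it flags can turn out to be innocent.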

Not suspected.

Not investigated.

Flagged.

In a large population, that number doesn’t stay small.

It becomes thousands.

Lives interrupted.

Decisions made by something that doesn’t understand context—only patterns.
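
To see how quickly the numbers grow, it helps to run them. The short Python sketch below uses purely hypothetical figures, not data from any real system: a city of 1,000,000 people, 1 in 1,000 of whom is actually dangerous, and a system that catches 90% of the dangerous people while wrongly flagging 10% of everyone else. The function name `flag_counts` and its parameters are illustrative assumptions.

```python
def flag_counts(population, base_rate, sensitivity, false_positive_rate):
    """Estimate how many flags are correct and how many land on innocent people.

    All inputs are hypothetical assumptions chosen for illustration:
    base_rate            share of the population that is actually dangerous
    sensitivity          share of dangerous people the system flags
    false_positive_rate  share of innocent people the system flags anyway
    """
    dangerous = population * base_rate
    innocent = population - dangerous
    true_flags = dangerous * sensitivity
    false_flags = innocent * false_positive_rate
    share_innocent = false_flags / (true_flags + false_flags)
    return true_flags, false_flags, share_innocent


# Hypothetical city: one million people, one in a thousand actually dangerous.
true_flags, false_flags, share_innocent = flag_counts(
    population=1_000_000,
    base_rate=0.001,
    sensitivity=0.9,
    false_positive_rate=0.1,
)

print(f"Correct flags:       {true_flags:,.0f}")
print(f"Innocent flags:      {false_flags:,.0f}")
print(f"Innocent share of all flags: {share_innocent:.1%}")
```

Under these assumed numbers, the system flags roughly 900 dangerous people and close to 100,000 innocent ones. Change the base rate or the error rates and the totals shift, but as long as real threats are rare, innocent people tend to make up most of the flagged list.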

And Once a System Like This Exists… It Expands

This is where things tend to follow a familiar pattern.

A system is introduced for a specific purpose.

Then it proves useful.

Then it becomes necessary.

And eventually…

It becomes permanent.

History is full of examples where tools introduced during uncertainty never disappeared.

They scaled.

They integrated.

They became part of the infrastructure.

Not questioned.

Just… accepted.

The Real Danger Isn’t Control. It’s Trust.

The most unsettling part isn’t that these systems could exist.

It’s that people might trust them.

Because once a system is trusted to make decisions…

Those decisions stop being challenged.

They become automatic.

And when something goes wrong, it’s harder to point to a person.

Because there isn’t one.

There’s just the system.

This Is Where the Story Begins

What happens when no one is left to question the system?

That question became the foundation of my technothriller The Zero Index.

But before a system like that takes hold…

There’s always a beginning.

A moment where everything still feels normal.

Where people believe they’re choosing safety.

Where no one realizes what’s being built underneath it all.

A City Celebrates Peace. Then Everything Changes.

That moment is explored in a free prequel:

The Last Day of Harmony

It starts with a city celebrating 100 years of peace.

A milestone.

A success story.

But behind the scenes, something new is being introduced.

A system designed to optimize safety.

To prevent disruption.

To ensure that nothing ever threatens that peace again.

By the end of the day…

The system is live.

And nothing will ever be the same.

Read the Free Prequel

If you’re drawn to stories about:

- AI surveillance and predictive systems

- Pre-crime technology and ethical tradeoffs

- Dystopian futures grounded in real-world trends

- Psychological thrillers about hidden systems

You can download the prequel here:

👉 Download The Last Day of Harmony (Free): https://books.plot-studios.com/the-last-day-of-harmony

Before You Go, Consider This...

If a system could predict violence with near-perfect accuracy…

But sometimes it was wrong…

Would you accept it?

Or would you wait until it made a mistake with you?